Hey u/benl5442 on Reddit (and the wider crowd watching this unfold), you threw down the gauntlet with the evolved V3.2 framework and that “probably unbeatable” prize for anyone who can prove post-WWII capitalism survives the AI cognitive dominance wipeout. Well, I agree, almost impossible to sink your battleship, but I do have a response.

Author’s note: AI was used to generate the charts and illustrations.

Preamble: A Human-Centric Reflection on the Discontinuity Thesis

The Discontinuity Thesis (in its hardened V3.2 form) presents a truly formidable argument. Its mathematical guardrails make the case appear airtight, and the prize challenge is openly described as “tough and probably unbeatable.” I’ve read the thesis many times, with multiple full passes through the core logic so that it sinks in, and while I expect my writeup to include some reaches at times, this was more for the fun of taking a truly well-written framework and trying to poke meaningful holes in it. I fully respect the thesis’s intellectual rigour. The rules, as I see them, are intentionally narrow, leaving little wiggle room for rebuttal. To be transparent about my approach: I used AI just a bit for quick cross-checks and to validate some of my assumptions, but the bulk draws from my own archives. I blog regularly on AI economics and salient topics, and I have literally hundreds of pages of prior research and writing to pull from. Much of this was reworking past writings and resynthesizing them for my purpose here. I also ran drafts through Grammarly so I don’t drive readers crazy with my horrible spelling (spelling only, I am too cheap for the AI version).

That said, this piece is not a formal rebuttal aiming to claim the prize. Instead, it offers an opinion rooted in something the framework deliberately sidelines: the stubborn, non-economic human element. Purpose, meaning, psychological resilience, and our innate drive to contribute and connect may not override market logic in the short term, but they could prove decisive in the long arc of societal adaptation. History shows humans do not passively accept obsolescence; we reinvent, often messily, when systems fail to align with our deeper needs. While the thesis documents a clean “death” via cognitive commoditization, I believe the human story rarely ends so neatly—post-turbulence reinvention remains possible, even probable, precisely because we refuse to be reduced to pure economic agents.

Critiquing the “Evolution Under Alternative Continuity” Framework

As a dabbler in global economics with far too many years of experience analyzing historical economic systems, their evolutions, and failures, I took up the challenge and took a stab at finding holes in your thesis.

The thesis takes a deterministic view: post-WWII capitalism, defined narrowly as mass productive participation through human labour, will inevitably “die” due to AI’s cognitive dominance, with no viable survival through coordination, adaptation, or redistribution. You treat schemes like UBI or AI dividends as mere “functional replacements,” akin to feudalism, and close loopholes by emphasizing that true survival requires ongoing human value creation, not just consumption support.

While the framework is rigorous and highlights real risks from AI disruption (good job, honestly), it suffers from a few flaws (Remember you asked):

Overreliance on absolutist assumptions, historical myopia, underestimation of human adaptability, and a rigid binary between “survival” and “replacement.” These holes undermine its predictive power.

In short, the thesis is sound on its own terms, but those terms exclude a dimension that may ultimately matter more than math alone, which I get to later on in this long-winded sort of rebuttal.

Dismantle the Assumptions:

The thesis suggests: “Post-WWII Capitalism is not defined merely by mass consumption capacity—it is defined by mass productive participation. The system dies when the majority of adults cannot contribute economically valuable labor, regardless of whether alternative income streams exist.”

The above interpretation is too absolute. Capitalism after World War II didn’t only work because almost everyone had a ‘productive’ job, and it hasn’t collapsed in times when lots of people were unemployed or in bad jobs. The system has kept going by using things like debt, government spending, low‑wage work in other countries, and finance. So it’s more accurate to say capitalism can adjust to many people being pushed out of good work, rather than saying it simply dies when most adults can’t contribute ‘economically valuable’ labour.

Thesis Suggests:

Key Distinction:

Functional Replacement: Mass consumption is maintained through redistributive mechanisms

System Survival: Mass productive participation where human effort creates economic value.

I see what you did here. This way of framing assumes there’s only one ‘real’ way for capitalism to survive (lots of people doing paid, value‑creating work) and treats everything else (taxes, transfers, public services, asset income, etc.) as a fake or temporary ‘replacement.’ But let’s get real, historically, capitalism has always mixed production with redistribution, credit, and state intervention, and it has repeatedly changed which groups count as ‘productively’ included. Drawing a hard line between ‘system survival’ and ‘functional replacement’ is more of a philosophical claim than an empirical one, and it ignores how messy and hybrid actual capitalist economies are.

I mean, man oh man, look at Canada and the US: governments are deeply in debt, running persistent deficits, and central banks have repeatedly used tools like quantitative easing that effectively pump new money into the system. Yet corporate profits and revenues have stayed high or even hit records during these periods, which shows that demand and business success can be propped up by state borrowing, central‑bank balance sheets, and financial mechanisms and not just by everyone having a ‘productive’ job as you claim. That makes the neat distinction between ‘real system survival’ through mass productive participation and ‘artificial’ survival through redistribution and money creation look too simplistic and moralistic, not a good description of how capitalism in Canada and the US actually operates.

And now onto the “Human Only Zones”:

2. Enhanced Falsification Conditions [FINAL]

This section has been expanded to incorporate the Boundary Collapse Clause, which addresses the claim that international coordination could preserve human economic relevance. Because AI erodes task boundaries continuously, no coordination regime can define or enforce ‘human-only zones.’

As I talk about later on in my post, legally and politically, there is already a strong bias toward keeping a human in the loop for high‑risk AI systems, precisely because responsibility and punishment have to land on a person or institution, not a GPU.

In safety‑critical domains, the direction of travel is toward more carefully engineered task boundaries, not their collapse: we separate what the AI is allowed to do, what must be checked by a human, and what requires explicit approval or dual control. This mirrors what we see in aviation, medicine, nuclear regulation, and now autonomous driving, where even if AI takes over most of the work, there remains a clearly named accountable party (a pilot in command, a supervising physician, a responsible engineer) when things go wrong.

99.9999% Accurate: Even in a domain like self‑driving, where people talk about ‘six nines’ of safety or trailing‑edge reliability, the practical reality is that systems are still paired with human supervision and gradual rollout, because getting to that level in all conditions is extremely hard and takes years of iteration. If we demand that kind of reliability before removing humans, then full boundary collapse and fully autonomous operation across the whole economy is a long‑term, speculative possibility, not an inevitable near‑term baseline.
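To put rough numbers on what those “nines” mean at scale, here is a quick back-of-the-envelope sketch. The daily decision volume is a made-up figure purely for illustration, not data from any real deployment, and the function name is my own.

```python
def expected_failures(reliability_pct: float, decisions: int) -> float:
    """Expected number of failures at a given per-decision reliability."""
    failure_rate = 1.0 - reliability_pct / 100.0
    return failure_rate * decisions


# Hypothetical volume: one million automated decisions per day (assumption)
DAILY_DECISIONS = 1_000_000

for label, pct in [("three nines", 99.9), ("five nines", 99.999), ("six nines", 99.9999)]:
    per_year = expected_failures(pct, DAILY_DECISIONS * 365)
    print(f"{label} ({pct}%): about {per_year:,.0f} expected failures per year")
```

Even at six nines, a high-volume system still produces failures every year, which is why somebody has to be on the hook for them.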

And global AI governance is messy and partial, but it is not nonexistent. States already coordinate on nuclear tech, aviation safety, finance, and some digital rules, and AI is thankfully starting to follow the same pattern, with emerging international principles, expert bodies, and soft‑law norms. Elon Musk has been a big advocate for this.

I’m not claiming to have flawless, ironclad mathematical proof that ticks every single falsification box perfectly; no one has a crystal ball for the future. But I do have coherent points grounded in economic history, human psychology, real-world tech transitions, and the stubborn resilience of market systems. Below is what we will call my “unofficial rebuttal” to why the “death certificate” for post-WWII capitalism is issued way too early, and why it will reinvent itself after the turbulence of AI/UBI.

The thesis then postulates: “Why Capital Redistribution Fails the Test: The Productive Participation Requirement: A dividend system fails because it creates economic citizenship without economic agency. Recipients consume but do not produce. This is feudalism with better marketing.”

This is just plain historically wrong. This claim quietly assumes that only wage labour counts as ‘real’ economic agency and ignores how modern capitalist states already mix production and redistribution. People on dividends or public income can still start businesses, organize, vote, care for others, and choose how to spend which are all forms of economic agency. And if it’s acceptable for a wealthy minority to live off capital income, it’s arbitrary and a bit of a reach to say a broad social dividend magically becomes ‘feudalism.’ That’s an ideological preference for a certain class hierarchy, not a hard test that redistribution ‘fails.’

Okay, I must admit, I am getting bored, so I will bash out a few more, then onto my more purely humanistic view of things below. So let’s take care of the math part of the thesis, or pseudo-math:

Shredding C1: Unit Cost Dominance

Let’s get real, AI isn’t guaranteed lower unit costs everywhere. High-stakes cognitive domains (medicine, law, engineering) demand 99.999% reliability plus massive upfront costs for training, liability insurance, error-proofing, and human backups. Human oversight isn’t “minimal”; it’s often the bulk of the expense.
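Here is a rough sketch of that cost argument. Every number is hypothetical and chosen only to show the mechanics; the cost model (compute plus review plus expected liability) is my own simplification, not the thesis’s formal definition of C1.

```python
from dataclasses import dataclass


@dataclass
class TaskCosts:
    """Hypothetical per-task dollar figures (assumptions, not measured data)."""
    ai_compute: float        # inference cost per task
    review_fraction: float   # share of AI outputs a human must check (0..1)
    review_cost: float       # human cost to review one output
    error_rate: float        # share of outputs that still fail after review
    error_liability: float   # expected cost of one failure
    human_only: float        # cost of a human doing the whole task


def ai_effective_cost(c: TaskCosts) -> float:
    """AI unit cost once oversight and expected liability are included."""
    return c.ai_compute + c.review_fraction * c.review_cost + c.error_rate * c.error_liability


def ai_is_cheaper(c: TaskCosts) -> bool:
    """True when the AI workflow undercuts the all-human workflow."""
    return ai_effective_cost(c) < c.human_only


# Routine task: light spot-checks, cheap mistakes
routine = TaskCosts(0.05, 0.05, 10.0, 0.01, 20.0, 15.0)
# High-stakes task: every output reviewed, rare but very expensive mistakes
high_stakes = TaskCosts(0.50, 1.0, 120.0, 0.001, 50_000.0, 150.0)

print("routine:", round(ai_effective_cost(routine), 2), ai_is_cheaper(routine))
print("high-stakes:", round(ai_effective_cost(high_stakes), 2), ai_is_cheaper(high_stakes))
```

With these made-up inputs the AI wins easily on the routine task and loses on the high-stakes one, because review and liability swamp the compute cost. Change the inputs and the answer changes, which is exactly the point: C1 is an empirical question per domain, not a given.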

Shredding C2: Competitive Defection

Markets aren’t atomized Darwinism. Regulations, unions, public procurement, and consumer backlash routinely block “cost-minimizing” defection. Just think GDPR killing cheap data harvesting, or Boeing’s 737 MAX grounding proving safety trumps short-term savings.

Shredding C3: Coordination Failure

“No mechanism exists” is false. Aviation (ICAO standards), nuclear (IAEA), pharma (FDA/WHO), and finance (Basel accords) enforce “economically suboptimal” human-preserving rules globally via treaties, sanctions, and licensing. AI governance is already emerging (EU AI Act, US executive orders, G7 Hiroshima code), not perfect for sure, but enough to carve out human zones where risks demand it.

These “constraints” assume frictionless markets, perfect AI, and zero politics, which is pure fantasy, honestly. Capitalism thrives on messy coordination and regulatory carve-outs, not theoretical purity. Also, in reality, to move AI anywhere near where it will need to be for the thesis’s predictions, the power consumption issues will need to be addressed, and it seems nobody is talking about that. OpenAI admitted that for them to reach their AI potential as they have promised to their investors, they will need the power equivalent to the power need of 1.9 billion, that’s billion with a B. Hell, they can’t even get those needed super-chargers for EVs in many towns as the needed power exceeds the local power grids.

Thesis says: The Capture Problem

“The political economy assumption underlying dividend schemes—that AI-owning elites will voluntarily redistribute their rents—contradicts 40 years of evidence showing elite capture of democratic institutions.”

The evidence isn’t perfectly uniform, but it’s far from balanced: elites routinely shape policy to limit redistribution when it matters most. Assuming they’ll voluntarily share AI rents ignores the structural incentives and historical record of capture. Nuance is good, but so is not diluting a well-supported warning.

So now on with my humanistic view of things….

So let me walk you through, and I will try to be as clear as possible.

Advancing a counter-theory: Post-WWII capitalism won’t perish but will reinvent itself after a turbulent transition involving AI and failed UBI experiments. This reinvention stems from humanity’s intrinsic need for purpose, the historical resilience of market-driven systems, and capitalism’s proven ability to lift societies from collapse. We saw this play out during COVID, when people stayed at home, received payments, and simply lost their way. They became depressed, which was, in most cases, due to having no real purpose. I write about the need for purpose here: https://marksdeepthoughts.ca/2024/05/13/universal-basic-income/

I’ll draw on real-world evidence, including analyses from economic blogs critiquing UBI’s psychological and practical shortcomings, to support this.

Flaw 1: Overestimation of AI’s Total Dominance and Underestimation of Human-AI Symbiosis

The thesis’s core premise (P1: Cognitive Automation Dominance) assumes AI will achieve “cost and performance superiority across cognitive work,” rendering human labour economically irrelevant en masse. This is a hole because it treats AI as an omnipotent, boundary-eroding force without acknowledging its persistent limitations or the emergence of hybrid models.

- AI’s Limitations: History shows technological revolutions create new niches rather than total obsolescence. For instance, the Industrial Revolution displaced artisans but spawned service economies. AI excels in repetitive, data-driven tasks but struggles with nuanced creativity, ethical judgment, interpersonal empathy, and context-dependent decision-making—areas where humans retain advantages. The thesis dismisses “AI-resistant domains” as temporary, but evidence from ongoing AI deployments (e.g., in creative industries like art or journalism) shows humans using AI as tools, not replacements. Tools like spell-check didn’t eliminate writers; they enhanced them.

- Hole in Boundary Collapse Clause: You claim tasks blur into “total substitution” (e.g., spell-check to decision-making), making “human-only zones” impossible. But this ignores regulatory and market-driven boundaries that persist. For example, in healthcare, AI assists diagnostics, but human oversight is mandated for liability and ethics. Economies can enforce such zones through incentives, not just treaties. Think certification programs for “human-crafted” goods, which command premiums in markets valuing authenticity (e.g., organic farming, post-industrial agriculture). A Rolls-Royce retains its prestige because it is “handmade”. Just imagine if that changed.

Okay, a segue into my thoughts on this: AI will disrupt, but humans’ need for purpose will drive reinvention. When UBI-like systems fail to provide meaning, leading to widespread depression and isolation, as observed during COVID-19 lockdowns, people will seek productive roles. My May 2024 analysis on UBI’s psychological impacts notes that detaching income from work risks eroding purpose, accomplishment, and social ties, potentially exacerbating mental health crises. It references psychologist Jordan Peterson’s view that such systems undermine human sustainability by removing the drive for meaningful contribution. Thus, AI-UBI models collapse not from economic math alone, but from human psychology, paving the way for capitalist resurgence where individuals innovate to fill unmet needs.

Flaw 2: Coordination Impossibility Ignores Historical Precedents

Section 2’s “Coordination Solution” demands perfect, defection-proof international agreements, dismissing them due to the Multiplayer Prisoner’s Dilemma (MPPD) and “definitional incoherence.” This is overly cynical and ahistorical.

- Historical Counterexamples: Coordination has succeeded in complex domains. The Montreal Protocol (1987) phased out ozone-depleting substances despite economic incentives for defection, through enforceable mechanisms and incentives like technology transfers. Trade agreements like the WTO manage “boundary-blurring” issues (e.g., intellectual property in digital goods) via arbitration, not perfect definitions. Even nuclear arms control, which the author contrasts, deals with evolving tech (e.g., cyber nukes) through adaptive treaties.

- Economic Incentives Overlooked: Your thesis assumes defection is inevitable (C3: Coordination Failure), but capitalism thrives on mutual gains. AI-owning elites might support human-preserving policies if mass unemployment tanks consumer demand, echoing Henry Ford’s wage hikes to create buyers for his cars. Jurisdictional arbitrage (e.g., tax havens) exists, but global minimum taxes (OECD’s 2021 agreement) show that enforcement is feasible.
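The enforcement point in the bullets above can be sketched as a toy model. This is my own simplification, not the thesis’s formal prisoner’s dilemma, and all payoffs are invented: a player defects only if the cost saving beats the expected penalty (chance of being caught times the sanction).

```python
def net_gain_from_defecting(saving: float, detection_prob: float, sanction: float) -> float:
    """Cost saving from defecting minus the expected penalty for doing so."""
    return saving - detection_prob * sanction


def stable_agreement(players: list[dict]) -> bool:
    """All-comply holds if no single player gains by defecting alone."""
    return all(net_gain_from_defecting(**p) <= 0 for p in players)


# Hypothetical payoffs: no enforcement vs. treaty-style sanctions
no_enforcement = [
    {"saving": 10.0, "detection_prob": 0.5, "sanction": 0.0},
    {"saving": 8.0, "detection_prob": 0.5, "sanction": 0.0},
]
with_sanctions = [
    {"saving": 10.0, "detection_prob": 0.5, "sanction": 30.0},
    {"saving": 8.0, "detection_prob": 0.5, "sanction": 30.0},
]

print("no enforcement stable:", stable_agreement(no_enforcement))
print("with sanctions stable:", stable_agreement(with_sanctions))
```

Without sanctions, defection always pays and the agreement falls apart, which is the thesis’s case. With a credible enough penalty, compliance becomes the self-interested choice. The real argument is over whether AI enforcement can reach that threshold, not whether such a threshold exists.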

This hole supports capitalism’s adaptability: After an AI-UBI failure, opportunistic actors will exploit collapses for profit, as seen historically. Tribes thousands of years ago engaged in proto-capitalist barter and specialization, trading tools for food to survive scarcity. Ancient civilizations like Mesopotamia formalized markets post-crises, lifting societies from poverty through incentive-driven exchange.

Flaw 3: Dismissal of Redistribution as “Replacement” Oversimplifies Transitions

You redefine “system death” to exclude UBI/dividends, calling them “feudalism with better marketing” (Section 3). This binary ignores gradual evolutions and UBI’s empirical flaws, which actually bolster the case for capitalism’s return.

- UBI’s Practical Failures: The thesis assumes redistribution could sustain consumption, but fails the “productive participation” test. Yet, real experiments reveal deeper issues. A July 2024 critique of the Texas UBI study (funded by Sam Altman) highlights its flaws: it only targeted extreme poverty ($29,000 average income), skewing results toward “responsible” spending on essentials. This isn’t representative of universal UBI, where middle-class recipients might reduce work incentives, leading to broader economic drag. The analysis calls for diverse, longitudinal trials to expose risks like complacency across demographics, implying current evidence overstates UBI’s viability.

- Psychological Hole: This is a big one for me. By ignoring human purpose, the framework misses why UBI fails. The earlier UBI analysis warns of moral hazards: reduced motivation, brain drain from taxes, and loss of employment’s psychological benefits (purpose, interaction). If 50%+ depend on transfers (Section 2’s Democratic Economic Agency), societal malaise ensues: not just economic irrelevance, but a collapse in innovation and resilience. This aligns with my prediction: AI-UBI leads to massive failure, but humans’ innate drive for purpose sparks capitalist reinvention. Entrepreneurs will fill voids (e.g., new services in a post-AI world), profiting from persistent needs like community-building or artisanal experiences.

Flaw 4: Mathematical Constraints Are Not Inescapable

The “Mathematical Constraint” – Oh, I love math – (C1-C3) treats economics as physics, ignoring adaptive variables. Unit cost dominance (C1) assumes static workflows, but markets evolve premiums for human elements (e.g., luxury goods). Competitive-defection (C2) overlooks monopolistic tendencies in AI like Big Tech coalitions. This absolutism echoes failed predictions like Malthusian traps, disproven by innovation.

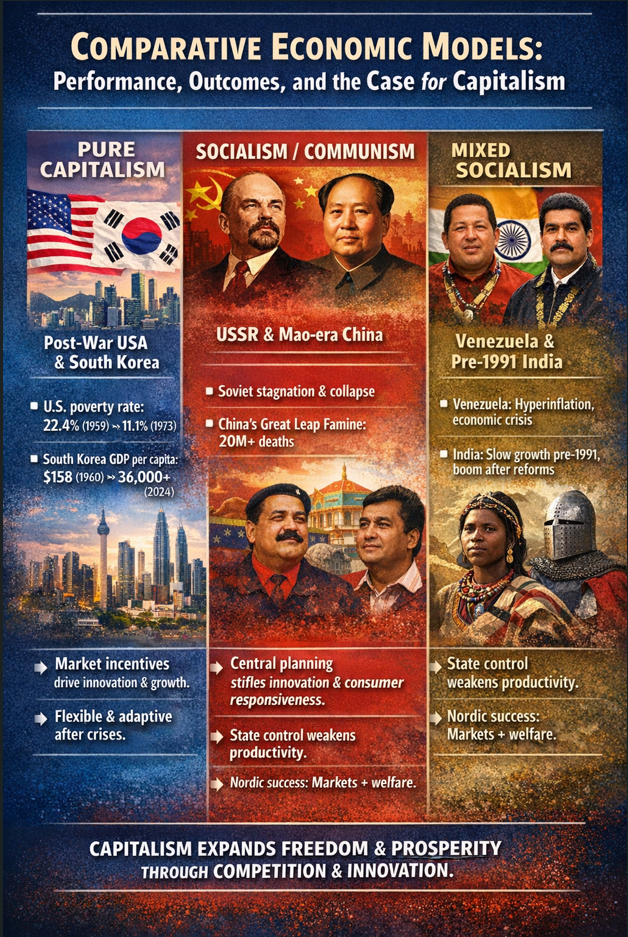

Capitalism’s Outperformance: Historical Comparisons

To underscore capitalism’s resilience, consider how it has repeatedly outperformed alternatives in lifting nations from poverty and adapting post-collapse. Nothing matches pure capitalism’s incentive-driven growth.

These comparisons show capitalism’s edge: It channels human purpose into productive ends, recovering from disruptions like the Great Depression via New Deal reforms blending markets. Socialism often collapses under inefficiency, as in Venezuela’s UBI-like subsidies fueling corruption.

Greed

The Ugly Truth: Greed as Capitalism’s Eternal Driver

Let’s address the elephant in the room, the ugly truth about human nature: greed. As Gordon Gekko famously declares in the 1987 film Wall Street (played by Michael Douglas):

“The point is, ladies and gentlemen, that greed, for lack of a better word, is good. Greed is right. Greed works. Greed clarifies, cuts through, and captures the essence of the evolutionary spirit. Greed, in all of its forms; greed for life, for money, for love, knowledge, has marked the upward surge of mankind.”

Loved this movie, but this brings me to a massive reality. This isn’t just movie dialogue, it’s an encapsulation of a fundamental driver of human behaviour. Greed isn’t always noble or pretty, but it is persistent, powerful, and, crucially, inescapable. Humans, perhaps not all, so I will not generalize here, are wired for self-interest, and greed (often thinly veiled as ambition, innovation, vision, or even “doing what’s right” – Sam Altman) fuels the overwhelming majority of economic activity. We see it everywhere in capitalist systems:

- Tech kazionairs (yes, that’s a word) like Jeff Bezos turned Amazon from a garage bookstore into a trillion-dollar empire through relentless expansion, cost-cutting, and market domination. Visionary? Sure. But the engine was an unquenchable hunger for more wealth, more control, more legacy. Mind you, as of this writing, his announcement of a $200 billion investment in AI seemingly tanked Amazon’s stock a few days ago.

- Elon Musk’s empire (Tesla, SpaceX, X) is sold as saving humanity and colonizing Mars, yet it’s powered by the same megalomania that drives billion-dollar bets and brutal work cultures, greed dressed up as destiny. That said, I am a fan of Elon, and I do think he has a pure heart.

- Even in supposedly “altruistic” corners, look at many venture capitalists, politicians, or influencers pushing UBI or “equity” narratives: beneath the rhetoric for the little guy often lies personal enrichment—stock options, book deals, speaking fees, or positioning for the next big power grab.

This isn’t cynicism; it’s observation. Greed doesn’t vanish when technology changes or when redistribution schemes promise to “fix” inequality. If anything, it adapts and thrives. In the context of the Discontinuity Thesis: when AI-driven UBI or dividend systems create mass economic detachment, greed ensures capitalism doesn’t stay dead. Opportunists—whether individuals, startups, or entrenched elites—will exploit every crack in the system. They’ll create black markets, premium human-only niches, new scarcity-driven services, or outright arbitrage opportunities to amass fortunes. Greed doesn’t accept purposeless consumption; it demands more profit, more status, more dominance. Far from dooming the system, this ugly trait is the unbreakable failsafe. As long as humans exist, greed will pull productive, incentive-aligned activity back into the center of the economy. It guarantees that any post-labour “replacement” model will eventually fracture under the pressure of self-interested actors who refuse to sit quietly on handouts. Greed isn’t a bug in capitalism—it’s the feature that ensures its reinvention, no matter how many times the thesis tries to declare it deceased.

Promoting the Reinvention Theory: Capitalism’s Inevitable Return-Yes it will!

The proposed “death certificate” is premature. AI and UBI will indeed cause turmoil, a massive economic reset as flawed redistribution schemes falter under psychological and incentive strains. But humans’ need for purpose ensures UBI’s demise: As the first article warns, it risks becoming a “trap” without ties to contribution, leading to societal decay. Post-collapse, capitalist minds will seize opportunities, filling needs in a purpose-driven economy. This mirrors ancient patterns—tribal specialization for profit-like gains—and modern revivals (e.g., post-Soviet Russia embracing markets).

Where I can push back a tad:

AI isn’t fully unbeatable yet, and we can’t call it a done deal. Right now, even the top AI models (think the latest from OpenAI, Anthropic, Google, or xAI in 2026) don’t completely dominate every kind of thinking work when you factor in real-world headaches: needing humans to double-check outputs, dealing with mistakes and made-up facts (hallucinations), slow response times, the huge energy costs for running them, extra work to tweak them for specific jobs, and how they can still get tricked or break under pressure.

Your thesis calls this “mathematical inevitability,” but honestly, it’s still more of a strong prediction than a proven fact. If progress slows down, maybe costs don’t drop as low as expected, or we hit real limits on how smart these systems can get before they plateau, then the whole argument starts to crack. The chain of “AI takes everything, jobs vanish, capitalism dies” isn’t locked in yet.

My second point: Markets already create lasting “human-only” zones, and they could grow bigger

Your thesis says tasks blend so smoothly that you can’t draw a clear line for jobs only humans can do, and that there is no way to enforce such a line without everything leaking away to cheaper AI. But look at how things already work: people happily pay way more for “handmade,” “human-created,” “no-AI-involved” stuff. As I have mentioned above, think luxury watches assembled by hand, original art, therapy sessions with a real person, high-end legal advice signed by a human lawyer, or top consulting where clients want the personal touch. These aren’t tiny side-hustles; they command huge price premiums because buyers value the authenticity, trust, and story behind them.

It’s like how “organic” food blew up after industrial farming took over, people started paying crap loads of money for stuff labelled natural and traceable. If we add strict rules around certification, legal liability (you can’t sue an AI the same way you can a human), and even consumer pushback against fully AI-made products, that could carve out a real, stable space for human work. In high-trust areas like healthcare, education, creative fields, or personal services, this price gap might get big enough to support solid middle-class jobs for a lot of people—not just a handful of artisans. It’s not guaranteed to save everything, but it’s a real market force that your thesis may be brushing off too quickly as temporary.

Then there is this….

The “Warm Body” Problem: You Can’t Sue a GPU (And That’s a Game-Changer)

Here’s something your thesis glosses over completely. At the end of the day, when AI screws up big time, and it will for sure (hopefully nobody dies), whether through a bad medical diagnosis, a wrongful denial of a loan, faulty legal advice, or even a self-driving car crash (sorry, Elon), society and the law demand someone real to hold accountable. You can’t drag a server rack into court, make a neural network pay damages, or throw a GPU in jail. I usually hit them with a bloody hammer, but that’s another story for another day.

AI has no legal, what I will call, “personhood.” It can’t form intent, sign contracts, or be punished. Every major regulation and court trend in 2026 still pins ultimate responsibility on humans: the developers who built it, the companies that deployed it, or the professionals who used or signed off on its outputs.

Look at the rules already in play. The EU AI Act (fully rolling out for high-risk systems by mid-2026) requires “appropriate human oversight” for anything touching health, safety, employment, credit, or critical infrastructure. That means designing AI so real people can monitor it, intervene, override decisions, and take final responsibility. Deployers have to assign trained humans to watch the system, report incidents, and ensure it doesn’t go off the rails. In the US, states like Colorado and others are pushing similar “reasonable care” duties for high-risk AI, with liability landing on companies if they don’t mitigate risks.

Hell, even GDPR Article 22 gives people the right to human intervention on automated decisions that affect jobs, credit, or big life choices—no fully autonomous “black box” allowed without safeguards.

This isn’t optional, it’s baked into negligence law, professional standards, and liability frameworks. If an AI hallucinates bad advice or discriminates (misgenders, OMFG!), the lawyer, doctor, or exec whose name is on it gets sued, not the model.

And courts and regulators won’t let that slide; they need a “neck to wring” to deter recklessness and compensate victims. That forces massive, ongoing human involvement with things like compliance teams, risk auditors, ethical reviewers, safety officers, and domain experts (SMEs) who govern swarms of AI bots (MoltBook?) doing the heavy lifting hundreds of times faster.

Think about it: AI might crunch data or draft reports at warp speed, but humans stay in the loop to verify, explain, contest, and they must own the outcome. In law firms, hospitals, banks, and factories, this could mean thousands of oversight roles, not replacing workers, but shifting them to higher-level governance. It’s a built-in market and legal demand for human judgment where trust, blame, and real consequences matter. Your thesis assumes cost pressure wipes out every human task, but it doesn’t explain how you arbitrage away the legal requirement for an accountable warm body.

This isn’t wishful thinking, thank god, it’s how the world actually works right now. Humans (hopefully) refuse to hand over final power when the stakes are high enough to ruin lives or bankrupt companies. That stubborn legal reality could preserve far more productive human participation than the framework predicts.

Final Word: In the long term, capitalism must survive because it aligns with human nature: trading, innovating, and profiting from scarcity. Short-term disruptions? Yes. Ultimate reinvention? Absolutely…..it always has.
